TAMLY AI sales platform

Designing an AI platform for non-technical users means every capability needs a human interface. For Warmly TAM, that meant translating enrichment agents, ML scoring models, and buying committee detection into workflows a sales rep could configure in minutes.

Role:

Lead Product Designer

Activities:

Information Architecture, AI-native UX, Scoring Model UX, Prototyping

The Problem

Sales teams don't have a data problem. They have a signal problem. Modern CRMs are full of contacts, companies, deals, and activities. The data exists. The prioritization doesn't. The average sales rep spends 65% of their time on non-selling activities - manual research, list-building, and guessing which accounts are worth pursuing. The core problem: sales teams can't answer the question that matters most - who do I go after, and why now?

My Role

I designed Warmly TAM end-to-end as the lead product designer - from CRM onboarding through the AI enrichment agents, buying committee finder, and scoring system.

What I owned:

• Information architecture across a multi-entity data model: accounts → contacts → buying committees → AI-enriched fields → intent scores

• AI-native UX: how non-technical sales reps configure agentic workflows without writing code

• Scoring model setup experience - making a machine learning model feel approachable to a sales rep

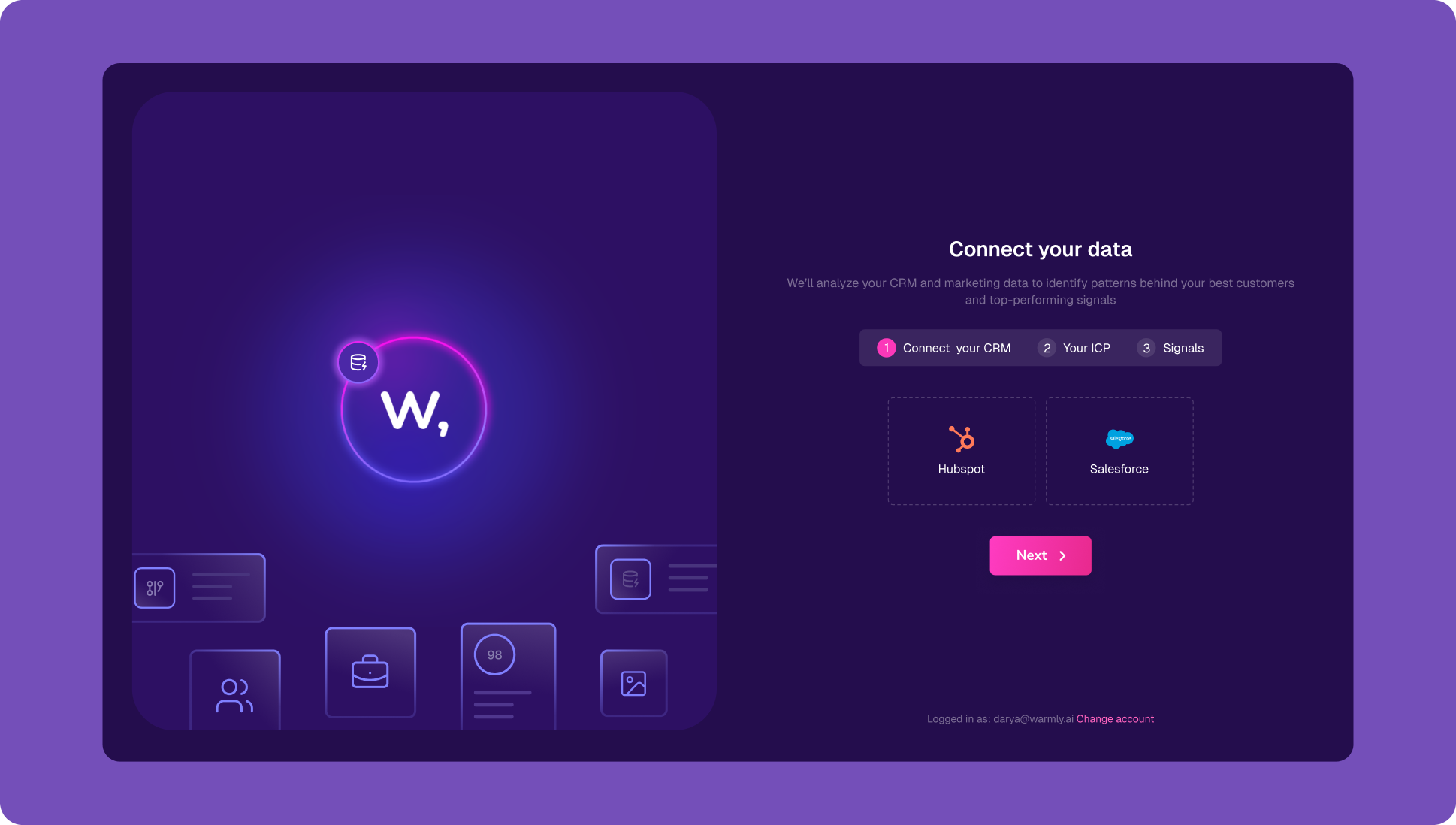

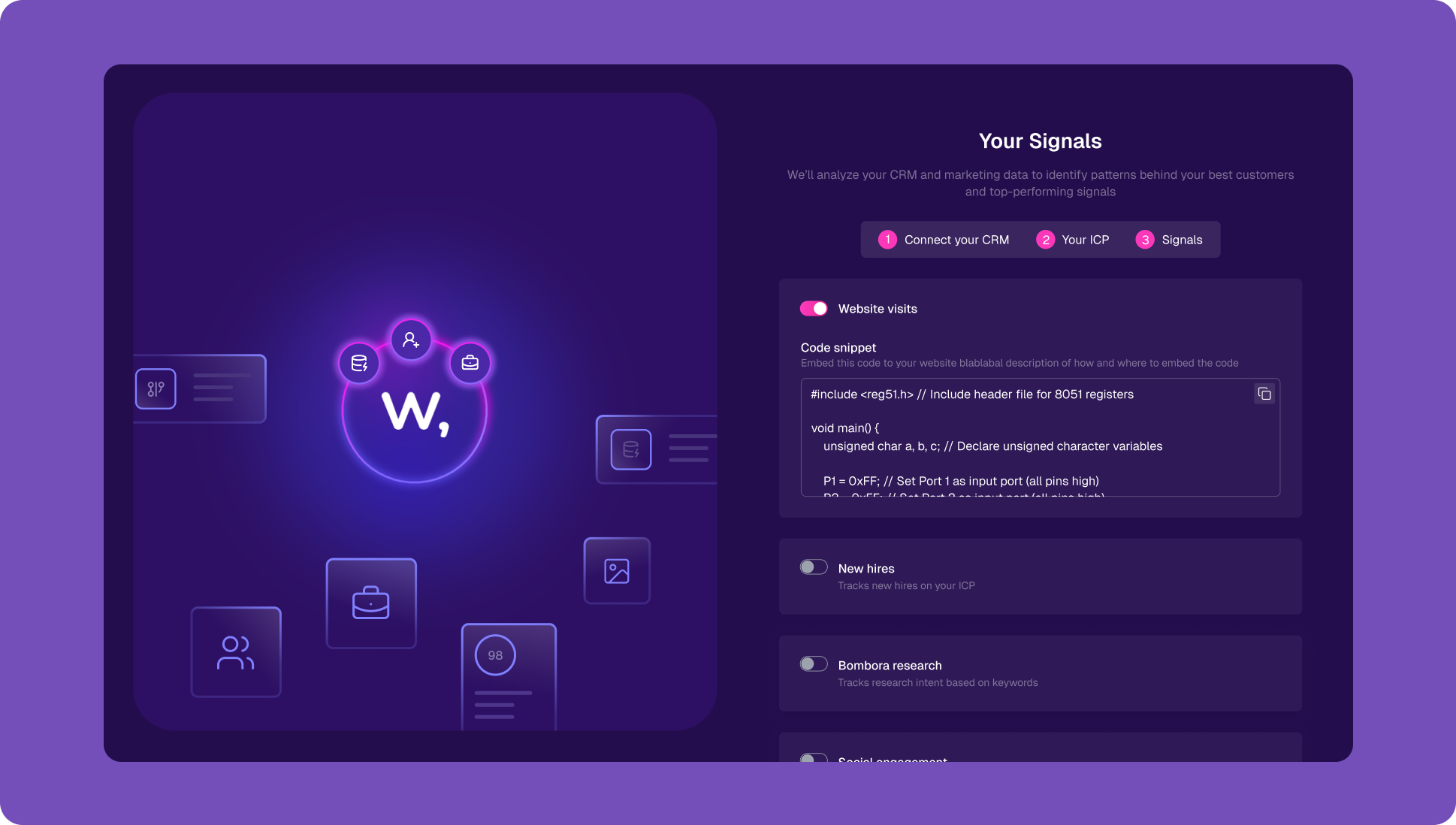

Onboarding

From zero to AI-ready in 3 steps

Before the AI can do anything useful, it needs data. The onboarding had to bridge a critical gap - getting users to connect their CRM without it feeling like an IT integration task.

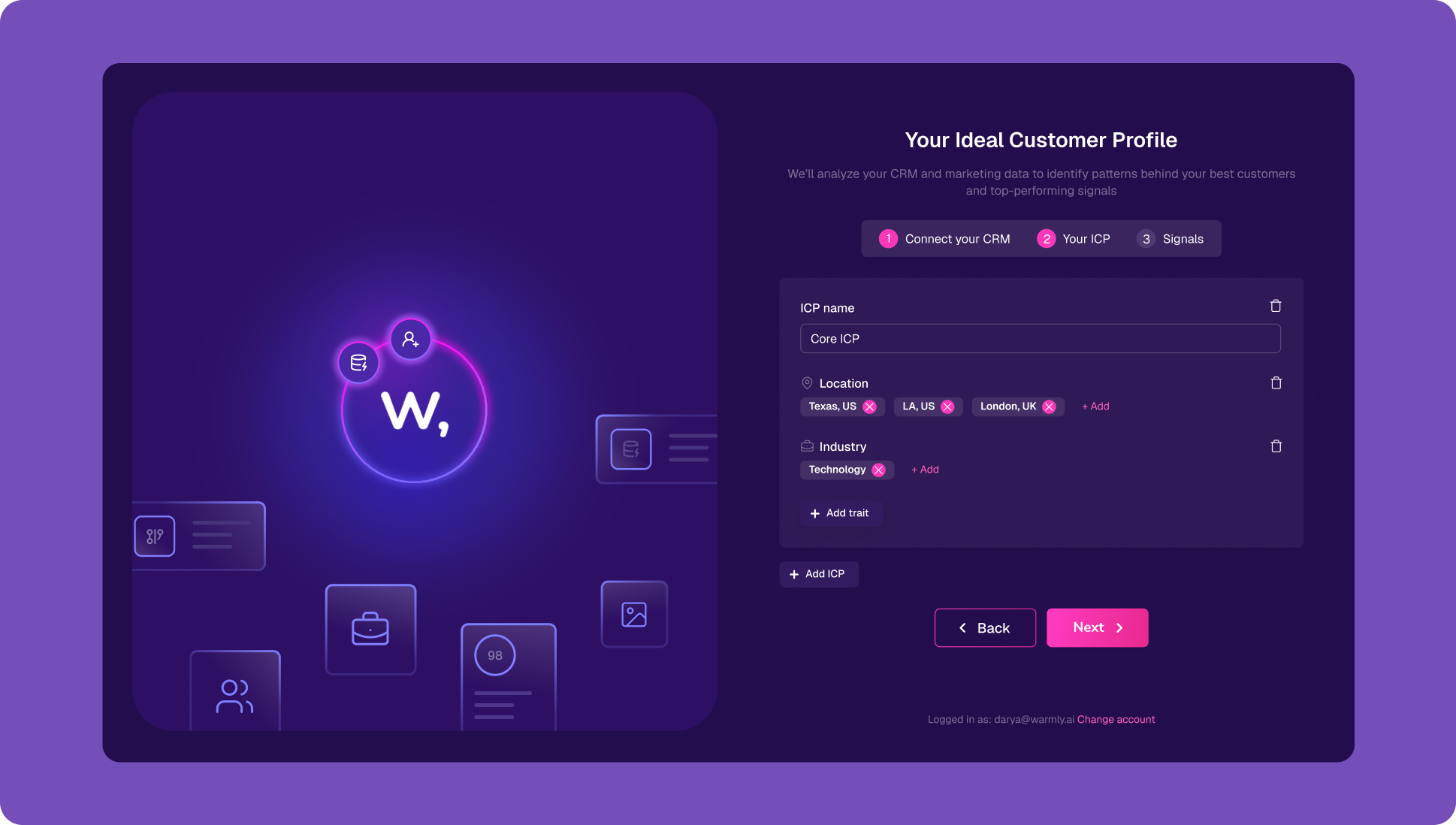

TAM + Dynamic Audiences

From noise to organized, living lists

The concept wasn't novel - segmenting accounts into lists is standard practice. What I redesigned was the maintenance burden. Audiences in TAM are criteria-based: you define the filters once, and any new entrant is automatically evaluated and placed. Lists stop being static snapshots and start reflecting reality.

Key design decisions:

• Audience name = human label, not a filter slug. Users name their audiences the way they think about their customers: "Core ICP", "Strategic ICP", "Marketing ICP"

• Dynamic badge on save: makes the always-updating behavior visible and reassuring, not hidden

• Filter → count update: as each filter is added or removed, the matching company count animates to its new value - communicating that the audience is computed in real time, not frozen
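To make the "criteria-based, not a snapshot" idea concrete, here is a minimal sketch. It is an illustration under assumptions, not Warmly's implementation: the `Account` fields, the `DynamicAudience` class, and the example filters are all hypothetical. The point it shows is that membership is recomputed from the filters on every read, so a new account appears in the audience (and the count changes) without anyone editing a list.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass(frozen=True)
class Account:
    # Hypothetical account shape, for illustration only.
    name: str
    industry: str
    employees: int


# A filter is any predicate over an account (assumed representation).
Filter = Callable[[Account], bool]


@dataclass
class DynamicAudience:
    name: str  # human label, e.g. "Core ICP"
    filters: List[Filter] = field(default_factory=list)

    def matches(self, account: Account) -> bool:
        # An account belongs only if it passes every filter.
        return all(f(account) for f in self.filters)

    def members(self, accounts: List[Account]) -> List[Account]:
        # Membership is computed on demand, never stored.
        return [a for a in accounts if self.matches(a)]


core_icp = DynamicAudience(
    name="Core ICP",
    filters=[
        lambda a: a.industry == "SaaS",
        lambda a: a.employees >= 200,
    ],
)

accounts = [Account("Acme", "SaaS", 500), Account("Globex", "Retail", 900)]
count_before = len(core_icp.members(accounts))

# A new entrant is evaluated against the same criteria automatically.
accounts.append(Account("Initech", "SaaS", 250))
count_after = len(core_icp.members(accounts))
```

Because the count is derived rather than stored, the same computation can drive the animated count in the filter builder as filters are added or removed.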

Enrichment Agents

Configuring AI without writing code

Research is where deals stall. The enrichment agent addresses this directly: a single prompt triggers a search across public sources and Warmly's internal databases, returning results as a column attached to any audience. Build it once, reuse it everywhere.

The trust question mattered here. I chose source attribution over confidence scores or tooltips - showing where the data came from, not how sure the system is. Provenance over abstraction.

Buying Committee Finder

From a company to the right people inside it

B2B deals don't close with companies - they close with specific people inside companies. But most CRMs have account data and contact data in separate silos, with no intelligence about which contacts matter for a given deal. The buying committee finder had to solve two problems in sequence: classify existing contacts by persona, then surface who you're missing.

Buyer personas: name your persona (Champion, Economic Buyer, Technical Evaluator), define include/exclude titles, add special instructions. Drag to rank by priority. As many personas as needed.

Test panel: pick any company → instantly see which existing CRM contacts match each persona and which would be net new.
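The two-step logic (classify existing contacts, then surface missing personas) can be sketched roughly as follows. This is a deliberately simplified stand-in: it uses plain substring matching on titles, whereas the product relies on an AI agent and free-text special instructions. The `Persona` class, `match_persona`, `build_committee`, and the sample titles are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Persona:
    name: str
    include_titles: List[str]
    exclude_titles: List[str] = field(default_factory=list)


def match_persona(title: str, personas: List[Persona]) -> Optional[str]:
    """Return the first persona (in priority order) whose rules fit the title."""
    t = title.lower()
    for p in personas:  # list order stands in for the drag-to-rank priority
        if any(x.lower() in t for x in p.exclude_titles):
            continue
        if any(i.lower() in t for i in p.include_titles):
            return p.name
    return None


def build_committee(
    contacts: Dict[str, str], personas: List[Persona]
) -> Tuple[Dict[str, List[str]], List[str]]:
    """Classify existing contacts, then list personas with no match."""
    found: Dict[str, List[str]] = {p.name: [] for p in personas}
    for contact_name, title in contacts.items():
        persona = match_persona(title, personas)
        if persona is not None:
            found[persona].append(contact_name)
    missing = [p.name for p in personas if not found[p.name]]
    return found, missing


personas = [
    Persona("Economic Buyer", ["cfo", "vp finance"], ["assistant"]),
    Persona("Champion", ["head of sales", "sales director"]),
    Persona("Technical Evaluator", ["engineer", "architect"]),
]

# Existing CRM contacts for one company: name -> job title (made-up data).
contacts = {
    "Dana": "CFO",
    "Lee": "Executive Assistant to the CFO",
    "Sam": "Sales Director",
}

found, missing = build_committee(contacts, personas)
```

In this made-up example, the exclude rule keeps the executive assistant out of the Economic Buyer slot, and Technical Evaluator comes back as the gap to fill with net-new contacts.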

The TAM column view shows the buying committee inline for each account, so users can quickly scan the available data and focus on the accounts with the most complete committees.

The run log records every enrichment: company, matched contact, persona, confidence %, and last run timestamp. Confidence spans the full range: for example, 98% for strong title matches, 75% for adjacent roles, 12% for weak matches.

Row states in the table: Normal → Processing → Reprocessing (user-triggered) → Cancelling → Failed. Each state has distinct visual treatment so users know what the system is doing per account.
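One way to reason about these row states is as a small state machine. The sketch below is an assumption-heavy illustration: the five state names come from the list above, but the allowed transitions in `ALLOWED` are guesses made for the example, not a documented specification of the product.

```python
from enum import Enum


class RowState(Enum):
    NORMAL = "normal"
    PROCESSING = "processing"
    REPROCESSING = "reprocessing"  # user-triggered re-run
    CANCELLING = "cancelling"
    FAILED = "failed"


# Assumed transition table (hypothetical, for illustration only).
ALLOWED = {
    RowState.NORMAL: {RowState.PROCESSING, RowState.REPROCESSING},
    RowState.PROCESSING: {RowState.NORMAL, RowState.CANCELLING, RowState.FAILED},
    RowState.REPROCESSING: {RowState.NORMAL, RowState.CANCELLING, RowState.FAILED},
    RowState.CANCELLING: {RowState.NORMAL, RowState.FAILED},
    RowState.FAILED: {RowState.REPROCESSING},
}


def transition(current: RowState, target: RowState) -> RowState:
    """Return the new state, or raise if the move is not in the table."""
    if target not in ALLOWED[current]:
        raise ValueError(f"Cannot go from {current.value} to {target.value}")
    return target


state = RowState.NORMAL
state = transition(state, RowState.PROCESSING)
state = transition(state, RowState.FAILED)
state = transition(state, RowState.REPROCESSING)  # user retries the failed row
```

Writing the transitions down explicitly is also a useful design check: each key in the table needs its own visual treatment, and each edge needs a trigger the user can understand.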

Scoring Agent

A machine learning model that a sales rep can set up in 3 clicks

The next logical step was to enhance the system with a score - one that could take all available signals and data per account and contact and answer a single question: who do I prioritize? The design challenge was making a machine learning model feel owned and configured by the user, not handed to them as a black box. At the same time, the configuration UX couldn't require any data science knowledge.

Feature selection panel

The scoring model is built by selecting signals from 543 available CRM features.

Live benchmark panel

Shows the before/after impact of the model being built from the user's own CRM history - alongside the feature selection, in real time.

Insights tab

Post-model view showing cluster analysis. The system identifies which customer types perform best.

Microinteraction

Score hover card. Hovering an account opens a rich card - not a tooltip - with full deal intelligence: expected value, win probability, sales cycle estimate, conversion window. This is the moment the product delivers on its core promise.

Outcomes

71% returned within the first week

We launched the TAM Agent platform in beta and spent the next six months refining it. The core retention signal we tracked: how many users came back versus how many checked it once and left. 71% returned within the first week - a strong signal that the platform was delivering enough value to pull people back.

One pattern stood out in the usage data - prompt quality was low. Users weren't spending time crafting good prompts, which directly limited what each agent could return. That became a fast follow-up: a lightweight prompt improvement flow that made it easy to write better inputs. Users who engaged with it got noticeably richer outputs across every agent they configured - enrichment, buying committee, scoring.

Reflection

What I'd do differently: The enrichment agent creation flow is two explicit steps (Field Details → Update Policies). In testing, many users wanted to skip Update Policies entirely and just run the agent. In hindsight, I'd explore a "quick create" path that defers update policies to after the first run - let users see the value first, configure the automation second. The current flow optimizes for completeness over first-run momentum.

Open design problem: The scoring model's transparency debt. The system shows users what the model predicts but not why a specific account scores the way it does. The cluster analysis in Insights gets close - but individual account score explanation is something I'd want to explore in V2.